Your AI Agent is only as good as what it learns

My day job is being an Engineering-Manager-slash-Tech-Lead. I started that in July 2025. For the first half year, my job was mostly managing humans and working on technical concepts and pitches. I had little time left for working on code. I started using Cursor to work on code and had Cursor rules in place (to instruct the AI on how to work with code, what rules to follow, what not to do, etc.). This worked OK.

I felt like I could start to focus on contributing technical work again.

It really changed once I was able to use Claude Code at work.

In this article I want to share what works best for me, and how the system I use to work on our products with Claude Code gets smarter every day.

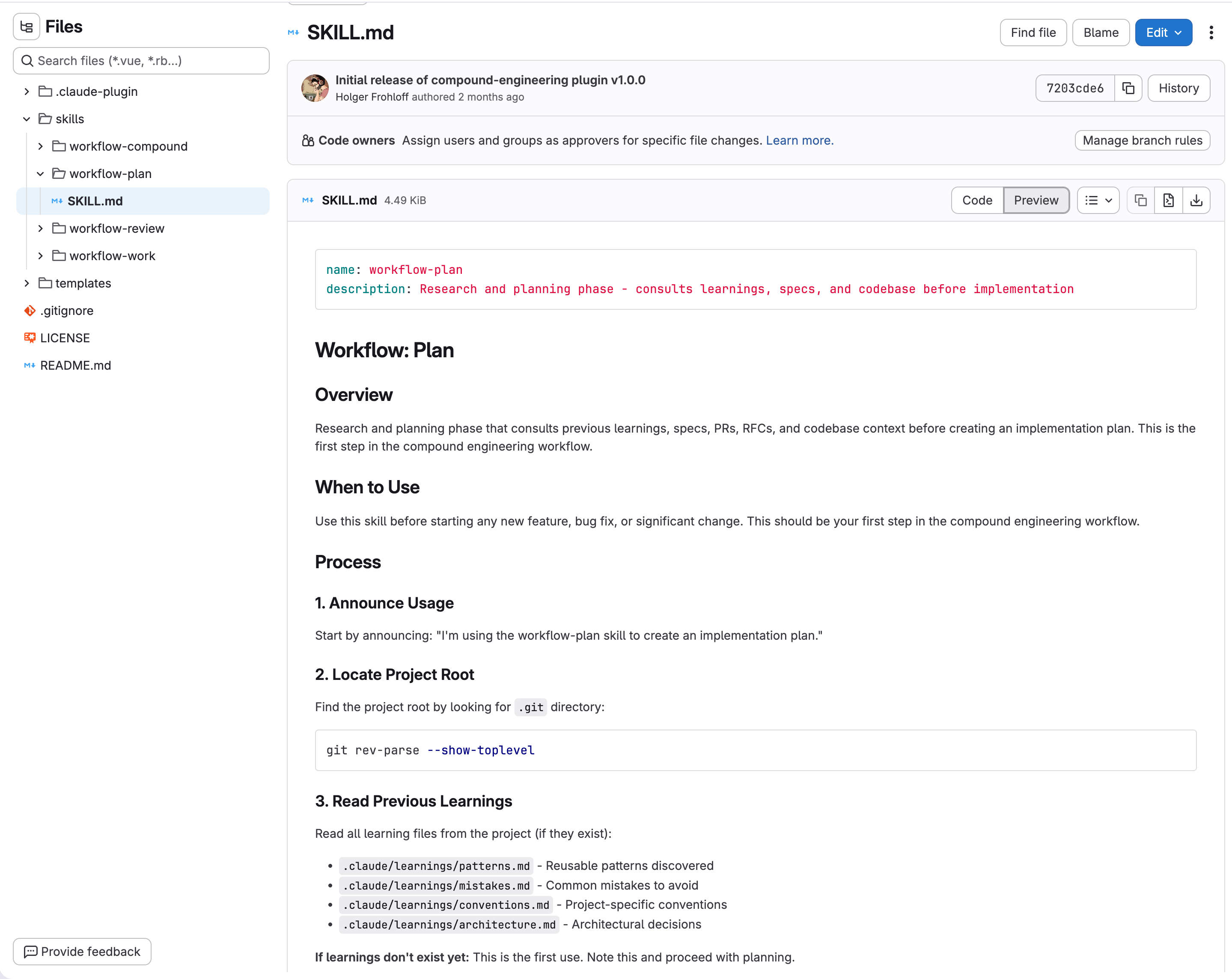

It all started when I read Will Larson's article "Learning from Every’s Compound Engineering". I set out to create a reinforcement learning system for my agentic work. Every plan results in work done, which results in learnings captured. Those learnings then get used to improve the plans for the next work item.

I bet there is stuff to improve on here, but the results I get are good.
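The plan, work, learnings loop described above can be sketched in a few lines of Python. This is only an illustration under assumptions, not my actual setup: the file name `LEARNINGS.md` and the helpers `load_learnings`, `build_plan_prompt` and `capture_learning` are made up for this example, and the agent that does the real work is left out entirely.

```python
import tempfile
from pathlib import Path


def load_learnings(path: Path) -> list[str]:
    """Return previously captured learnings (empty list if the file does not exist yet)."""
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    # Each learning is stored as a "- " bullet; strip the bullet prefix.
    return [line[2:] for line in lines if line.startswith("- ")]


def build_plan_prompt(task: str, learnings: list[str]) -> str:
    """Put earlier learnings in front of the agent when it plans the next work item."""
    lines = [f"Task: {task}"]
    if learnings:
        lines.append("Learnings from earlier work:")
        lines.extend(f"- {item}" for item in learnings)
    return "\n".join(lines)


def capture_learning(path: Path, learning: str) -> None:
    """Append one learning after a work item is done, so the next plan can use it."""
    with path.open("a", encoding="utf-8") as fh:
        fh.write(f"- {learning}\n")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        learnings_file = Path(tmp) / "LEARNINGS.md"  # hypothetical file name
        # First work item: nothing learned yet, so the prompt is just the task.
        print(build_plan_prompt("Add CSV export", load_learnings(learnings_file)))
        capture_learning(learnings_file, "Stream large exports instead of loading them into memory")
        # Second work item: the earlier learning is now part of the plan prompt.
        print(build_plan_prompt("Add PDF export", load_learnings(learnings_file)))
```

The point of the sketch is the direction of the data flow: nothing reaches the next plan unless it was written down after the previous work item.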

Merge Requests / Pull Requests

Yesterday, while listening to Avdi Grimm’s podcast, I thought about all the knowledge that gets lost because it is never transferred from code reviewers to the AI. We do have thorough code reviews, and sometimes errors or architectural decisions impact the merge request significantly. Those discussions only live in the MR, and if you are not part of the discussion, you might never see them. The AI certainly does not.

That’s why I created a new skill that combs through the last x merge requests and finds every relevant discussion by humans (and also by the AI review bot we have). It extracts the learnings and writes them to the project, so my compounding workflow can pick them up next time.
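To make the filtering step of such a skill more concrete, here is a rough Python sketch. It assumes the merge requests and their comments have already been fetched from the code host. The `Note` and `MergeRequest` classes, the `min_length` cutoff and the output format are hypothetical choices for this example, not what my skill literally does. Turning the kept comments into actual learnings is left to the agent.

```python
from dataclasses import dataclass


@dataclass
class Note:
    author: str
    body: str
    system: bool = False  # True for auto-generated events such as "changed the description"


@dataclass
class MergeRequest:
    iid: int
    title: str
    notes: list[Note]


def relevant_notes(mr: MergeRequest, min_length: int = 40) -> list[Note]:
    """Keep human and review-bot comments; drop system events and very short replies."""
    return [
        note
        for note in mr.notes
        if not note.system and len(note.body.strip()) >= min_length
    ]


def learnings_markdown(mrs: list[MergeRequest], last_n: int) -> str:
    """Collect the relevant discussion of the first `last_n` merge requests as markdown.

    Assumes `mrs` is already sorted newest first.
    """
    lines: list[str] = []
    for mr in mrs[:last_n]:
        notes = relevant_notes(mr)
        if not notes:
            continue  # nothing worth keeping from this merge request
        lines.append(f"## !{mr.iid} {mr.title}")
        lines.extend(f"- {note.author}: {note.body.strip()}" for note in notes)
    return "\n".join(lines)
```

The length cutoff is a crude stand-in for "relevant": it throws away replies like "LGTM" but will also miss short comments that matter, so a real skill would let the agent judge relevance instead.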

That’s it. Short and sweet. If you use AI agents to work on your code projects, make sure they get “smarter” every time.